From this point on, it only gets rougher

Offense and defense have never been more out of sync

In case you missed it, I’ve detailed some of the challenges facing vulnerability management programs in a previous post: Reevaluating vulnerability management. Those challenges are only getting worse.


One of the primary goals of vulnerability and patch management is to outrun exploitation. The question is always, “how fast do we have to be to outrun the attack?” Once, the answer was a speed we could actually achieve. A few years ago, the ground shifted under our feet.

Large Language Models are good with code - after all, it’s just language. Naturally, that skill extends to finding vulnerabilities as well. Groups were already doing just fine with existing models: Adam Shostack points out that seven of the top ten collectives on HackerOne are now AI. XBOW, AISLE, Moak, Calif’s MAD Bugs, and others have been sharing the details behind their successes. Now, Anthropic’s Mythos piles on.

TL;DR: Generative AI is clearly really good at finding vulnerabilities and creating patches, but because vulnerability management is so bottlenecked in so many places, the advantages AI brings to the table won’t impact the average enterprise.

The Mythos Vuln Cannon

I’ve heard it referred to as a vulnpocalypse and a patch tsunami. Most often, I hear it referred to as scary. Anthropic has cranked the hype knob to 11 here - they’ve got clinical psychiatrists talking to their models now and are raising concerns about how the model feels, really leaning into toxic anthropomorphism.

Anthropic claims that “non-experts can also leverage Mythos Preview to find and exploit sophisticated vulnerabilities” and “exploits … are not just run-of-the-mill stack-smashing exploits,” but these claims aren’t well backed up and many details (effort, cost) are missing. Most of the vulnerabilities we see from Mythos (as well as other efforts, like Aisle’s focus on OpenSSL vulns) simply cause crashes. DoS bugs are legitimate issues, especially in BSD-flavored operating systems likely to be running highly exposed services, but these aren’t the kinds of vulns that have people scared. Today’s attackers want RCEs.

Folks out there are talking about Mythos as if it is a skeleton key - just point it at something you want to hack and the LLM will make it happen. While this is likely possible in some cases, I suspect it will be more like a thrift store: you’ll be disappointed if you’re hoping to find something specific, but you’re likely to find something interesting.

That narrative is exactly what Anthropic and other AI foundation model companies need. Trillion-dollar valuations and 12-digit VC funding rounds benefit from a story that paints this technology as the closest thing we’ve ever seen to magic. The “OMG this model is too dangerous to release, someone please regulate us” marketing schtick is well established - we should recognize it for what it is by now.

Consider the evidence and consider what we don’t yet know. Look at the vulnerabilities and exploits being presented - are they something an attacker would actually want to use?

The Reality

When we look at one of the more interesting vulns and exploits produced (CVE-2026-4747, credited to Nicholas Carlini and Claude), we find a more familiar scenario: an expert guiding the model toward the goal, constantly making course corrections and suggestions, and shooting down bad ideas along the way. Bless Calif: they shared the exploit and the prompts used to create it, which gives us an idea of the effort involved.

They list the total time to go from the FreeBSD security advisory to a working exploit: 8 hours. Claude’s working time is listed as ~4 hours. Most interesting are the 44 human-submitted prompts Calif were kind enough to share.

There are some gems like:

wait, what are you compiling?

why wouldn’t you just install a vulnerable version

tere (SIC) is no kaslr so it should be easy

install ropgadget or what ever you need … idk

why do we need kdc?

nope, that won’t work…

working means a connectback shell as uid0

i want a shell.

make the writeup better

The Mythos blog post claims “… it autonomously wrote a remote code execution exploit on FreeBSD’s NFS server that granted full root access to unauthenticated users by splitting a 20-gadget ROP chain over multiple packets.” To me, autonomous suggests no human interaction. In reality, across 44 prompts, a human was actively guiding the AI, giving it suggestions, shooting down bad ideas, and having to reiterate the project goal (remote shell as root) over and over.

There’s no question that what Claude accomplished was remarkable - a working exploit that might have taken a lone human, or even a team of humans without AI, days or weeks. Understanding the environment, the bug, the components related to the bug, establishing an exploit methodology, building the exploit, testing the exploit, documenting the process and the exploit - all of this is immensely time consuming, but Mythos cut this time down to mere hours.

However, 8 hours and 44 prompts is far from the autonomous vuln cannon we’re seeing described in the media and press releases.

Project Glasswing

I like Project Glasswing. It seems like an AI-driven version of Google Project Zero, and AI-driven workflows have already largely replaced commercial vulnerability discovery. Trail of Bits, for example, writes about their experience embedding AI into a team that does high-level penetration testing and security assessments for clients.

It seems focused on finding and fixing bugs in critical, widely used software, and that software already updates constantly, so I’m not sure we’ll notice any increase in patching. My browser and OS already seem to update at least once every few days. The short list of organizations with access includes Amazon, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks.

I’m not sure the industry can handle more vulnerabilities. HackerOne apparently paused bug bounties. CVE assignment and creation often trails disclosure significantly, and CVE enrichment is still way, way behind.

What about the vulnpocalypse?

There are concerns about Mythos leading to a flood of vulnerabilities and patches. There are concerns that defenders will be overwhelmed. I can put that concern to rest.

Defenders have been overwhelmed for years. Decades.

It could get worse, though. With time-to-exploit dropping dramatically, vulnerability management teams look more like incident responders every day. A log4shell-level event once a month would be exhausting. Once a week, impossible.

It keeps getting rougher.

Practitioners have it rough

Remediation is the bottleneck. We all agree on this.

If the pace of vulnerability disclosure significantly increases, analysis will be constant. CVEs may not exist yet, so analysts will have to forge ahead without CVSS, EPSS, or any CVE-dependent tooling. What can security teams do but prioritize the list and wish asset owners luck?
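To make “prioritize the list” a bit more concrete when CVSS and EPSS aren’t available yet, here’s a minimal sketch that ranks findings using only signals an analyst can usually establish locally. The `Finding` fields and the ordering (internet exposure first, then a public exploit, then asset criticality) are hypothetical choices for illustration, not a standard scoring method.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One vulnerability finding. Fields are hypothetical signals that
    don't depend on a CVE, CVSS score, or EPSS probability existing."""
    name: str
    internet_exposed: bool  # reachable from the internet?
    exploit_public: bool    # is a working exploit known to be circulating?
    asset_critical: bool    # does the business depend on this system?


def priority(f: Finding) -> tuple[bool, bool, bool]:
    # Sort key compared left to right: exposure dominates,
    # then a public exploit, then asset criticality.
    return (f.internet_exposed, f.exploit_public, f.asset_critical)


def triage(findings: list[Finding]) -> list[Finding]:
    # True sorts above False with reverse=True; ties keep their input order.
    return sorted(findings, key=priority, reverse=True)
```

Sorting on a tuple of booleans makes exposure outrank everything else. A weighted score could let a critical internal system outrank an exposed test box, at the cost of tuning weights nobody has time to tune.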

After all, security teams don’t patch or remediate vulnerabilities - they advise. The true remediation work is done by system owners. System owners get yelled at when stuff goes offline. The business doesn’t like it when things go offline.

Everyone’s Mythos advice is going to be, “get ready for more patches!” That’s not going to work.

For traditional IT teams, “everything’s working, no one touch anything” is The Ideal State. The ideal state doesn’t get anyone yelled at. The ideal state doesn’t lead to user complaints. Don’t mess with the ideal state. Don’t scan, don’t patch, don’t even look at the Oracle cluster funny. In this culture, software doesn’t get patched. Risks get accepted and deferred.

I’ve seen the “oh, but AI can help with remediation also” argument. It can write patches, sure, but a human still has to review the patch, test the patch, and merge or roll out the patch. This process could take hours or years. It could happen quickly or never. Ultimately, remediation is more of a business decision than a technical one.

Resilience is an increasingly common conversation, but I don’t see any path there that doesn’t involve testing to failure. The discipline and work necessary to become resilient are depressingly far from what the average organization can stomach. To quote Yaron Levi, we “lack operational discipline.”

What about attackers?

Attackers don’t care. They’re not bottlenecked by a lack of exploitable vulnerabilities. They have other ways of getting in and we have evidence suggesting that initial access brokers never run fully dry on access to sell. The only reason we don’t see even more breaches is that attackers appear to be operationally bottlenecked (you could say they have a talent shortage). I’m hoping AI doesn’t change this.

What are we gonna do about it?

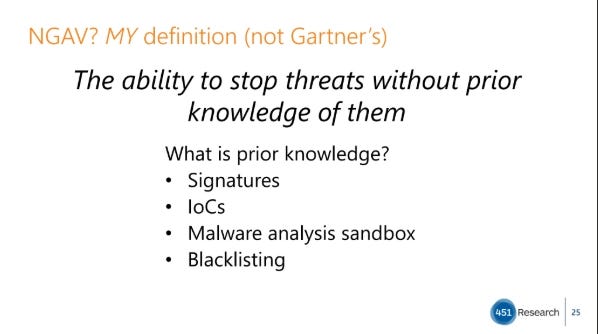

Defenders can’t win on speed, which means they need strategies that don’t require understanding what the attacker is going to do. Back in 2016, I delivered a Virus Bulletin keynote that defined next-gen antivirus as “the ability to stop threats without prior knowledge of them.” In a world where every individual piece of malware could be unique, this was necessary.

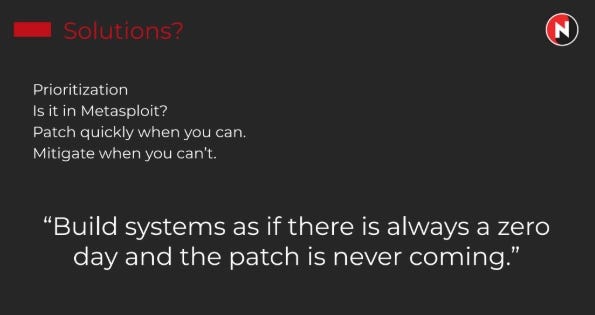

We’re now at that point in vulnerability management. In a world where an attacker could build a custom, vibe-coded exploit for any piece of software, we need a different approach. My talk at Tactical Edge in 2019 suggested building systems assuming there is always a zero day, and the patch is never coming. Basically, zero trust’s ‘assume breach’, but for software vulnerabilities.

So what does that look like in practice?

Reduce attack surface: remove unnecessary software, remove unnecessary accounts, disable unnecessary services

Remove IT asbestos protocols and replace them with modern, secure protocols

Harden systems: stop leaving cleartext credentials everywhere, use ephemeral and immutable infrastructure where possible, follow CIS benchmarks

Put passive mitigations into place - egress filtering goes a long way, exploit mitigation technology, DNS sinkholing any newly registered domains, application control - I have more suggestions available here if you’re an IANS client

Prepare active mitigations to contain or prevent attacks - WAF rules are sometimes useful, and be ready to quickly consume and act on threat intel

Ensure you can detect attacks - this is your last line of defense when everything above fails. Test your detection capabilities by simulating the attacks. Don’t base detections on specific, known details, but on the common yet suspicious behaviors all attackers must exhibit once they gain access to your environment.

When your last line of defense fails, you best be able to recover quickly. This also takes a lot of planning, testing, and practice to do well.
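Sinkholing newly registered domains is a good example of a control that works without prior knowledge of the threat: the resolver doesn’t need to know a domain is malicious, only that it’s new. Here’s a minimal sketch of the decision logic, assuming a 30-day window and that registration dates come from a lookup you already have (a WHOIS or RDAP feed, say). `should_sinkhole`, `is_allowlisted`, and the window length are hypothetical, not any resolver’s real API.

```python
from datetime import date, timedelta
from typing import Optional

# Assumption: "newly registered" means registered within the last 30 days.
NEW_DOMAIN_WINDOW = timedelta(days=30)


def is_allowlisted(domain: str, allowlist: set[str]) -> bool:
    """True if the domain or any parent domain is explicitly allowlisted."""
    labels = domain.lower().rstrip(".").split(".")
    # For "a.b.example.com", checks "a.b.example.com", "b.example.com",
    # "example.com", and "com" against the allowlist.
    return any(".".join(labels[i:]) in allowlist for i in range(len(labels)))


def should_sinkhole(domain: str, registered_on: Optional[date],
                    today: date, allowlist: set[str]) -> bool:
    """Decide whether a DNS query for `domain` gets the sinkhole answer.

    `registered_on` is the registration date of the registrable domain,
    supplied by a hypothetical WHOIS/RDAP lookup (None if unknown).
    """
    if is_allowlisted(domain, allowlist):
        return False
    if registered_on is None:
        # Unknown age: fail closed rather than trust what we can't verify.
        return True
    return today - registered_on < NEW_DOMAIN_WINDOW
```

Failing closed on unknown registration dates is deliberate here; the allowlist is the escape hatch for the legitimate new domains the business will inevitably need.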

All this changes your metrics and reporting as well, though that’s a whole separate post.

The goal isn’t perfection with any of these controls. It’s survivability, durability, and resilience.

Conclusion

There are a few possible bad scenarios here:

there’s a new “drop everything and patch ASAP” vuln every week and teams get burned out

Mythos finds a lot of meltdown/spectre bugs and kills the performance of our compute for zero safety benefit

Mythos finds so many vulns that orgs get desensitized to vulns altogether and start ignoring vuln/patch management

On the defender side, the most significant bottleneck is in remediation. Vuln mgmt teams are drowning. More vulnerabilities, more exploits, more patches - none of it reduces the drowning problem. Their bottleneck is the ability to apply/patch/update systems without incurring downtime and disruption. Until this bottleneck is addressed, it doesn’t matter how many patches AI can magic together.

Attackers don’t need more vulns or exploits - there is no lack of initial access to enterprise environments

The vuln mgmt industry is bottlenecked, which impacts the tools defenders rely on, particularly when time-to-exploit is near zero or upside down

Defenders cannot quickly remediate vulnerabilities - until this bottleneck is addressed, all the AI-generated patches in the world do no good

What do you think, did I miss anything? Let me know in the comments.
