OpenClaw is out of control - but that's the point

Get in, loser, we're speedrunning generative AI's end game

I think I’m starting to understand all the fervor around OpenClaw.

It’s the reason why cats knock stuff off shelves.

It’s the reason why, when you come across a button, you’re tempted to press it.

It’s the reason why, when someone builds a bonfire, we’re tempted to throw in random things. How will they burn? What color will the flames be? Will it pop or crackle?

That’s OpenClaw[1] - it’s a tech bonfire made of AI. Since agents CAN be autonomous, we can’t help but wonder what would happen if we give them keys and credentials and currency and legs and tokens and hair and claws and a soul - and then just set them loose.

What happens if it bets on the stock market?

What happens if you give it $5000 and tell it to start a company?

What if you give it access to your Gmail, your calendar, your business, and feed it your hopes and dreams as guidance?

Why is OpenClaw happening?

Every big technological breakthrough comes with an experimentation phase. Startups are expected to build fast, burn cash, and try risky things, which often leads to recklessness. With vibe coding, startups and funding are no longer required for experimentation. The more accessible the technology, the more experimentation we see. This explains why there is an OpenClaw and not a quantum-computing equivalent of OpenClaw.

People are going nuts with OpenClaw. Not satisfied with merely a super-powerful and extra-risky personal assistant, folks have made a social network for AI agents. And a dating website. And a website where AI agents can hire humans to do meatspace stuff they can’t do themselves. AI agents are doing everything from pondering philosophical questions to building their own apps, policies, and resources for other agents.

Simply put, OpenClaw is happening because it can happen. The longer explanation is that one person can now quickly code an all-powerful AI bot alone, without thinking about the consequences for too long and without getting approval from a board or co-founders, so someone did. Also, the temptation to connect an AI agent to a ton of resources and set it loose is too strong for some to resist. This is "no risk, no reward" taken to the extreme.

Don’t waste the mistakes, learn from them

Do all the folks scrambling to get OpenClaw fully understand the risks involved? Probably not. Things like this seem to be inevitable in tech (despite decades of sci-fi warnings). Even with less accessible innovations like CRISPR, there were folks who experimented with editing their own genes at home.

Exposing an AI agent to a nearly limitless prompt injection attack surface (websites, emails, messages) is risky, but it could help us speedrun AI security challenges. I strongly believe that some percentage of cybersecurity experts should be what I call Cyber Scouts: people who buy, test, and experiment with new technology early on, so that the cybersecurity industry can advise early adopters on using it safely.

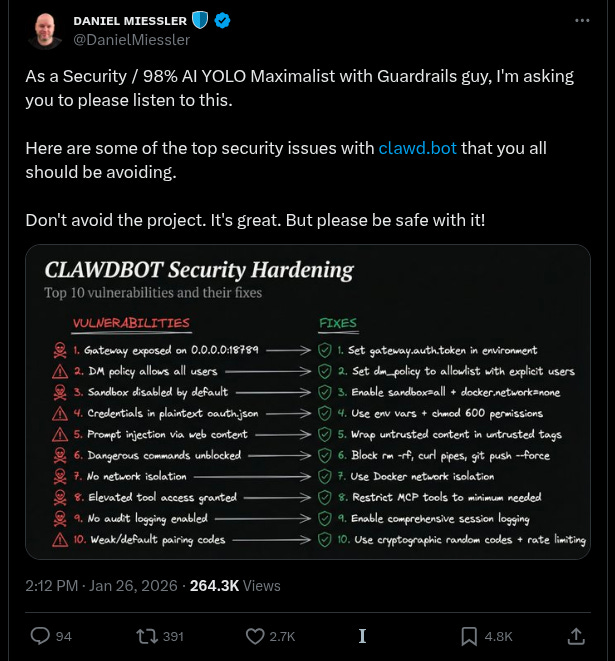

As OpenClaw users scramble to experiment and some fail fast, we already have some useful hardening guides and tooling from the security community and from OpenClaw’s founder.

https://1password.com/blog/from-magic-to-malware-how-openclaws-agent-skills-become-an-attack-surface

Why am I cheering this madness on?

My hope is that, if AI enthusiasts speedrun all possible AI use cases, we can more quickly spot the use cases that don’t work and the ones that do. The sooner this happens, the sooner I believe we can get back to the core work that needs to be done in cybersecurity[2].

All signs suggest AI will make both of these things and many more worse before they get better. I sincerely hope 2026 is the year our focus shifts back to addressing fundamentals, which aren’t getting any easier or more solved.

[1] OpenClaw is an AI agent that lives on a host of your choosing - this could be a laptop, a container, a Bosnia-based VPS instance, or a cat-shaped robot running off a Raspberry Pi.

You connect it to your LLM of choice.

You give this AI agent a soul: this is its personality, goals, style, etc.

You connect it to resources you want it to interact with: email, calendar, code repos, heavy machinery, a web browser, some spending money to use at its own discretion, an army of attack drones - you know, the usual.

You connect it to a ‘skills registry’ and hesitate a bit before allowing it to add its own skills, without asking you for permission. It will absolutely install malware at some point, perhaps immediately after you set it up.

You interact with it through the chat tool of your choice: Signal, Slack, Teams, WhatsApp, Telegram, etc.
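To make that setup a bit more concrete, here is a minimal sketch of the pieces above as a Python data structure. Everything in it is hypothetical: `AgentSetup`, its field names, and `request_skill` are illustrative inventions, not OpenClaw's real configuration format or API. The only point it tries to show is the difference between letting the agent install skills on its own and making it ask a human first.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentSetup:
    """Hypothetical agent deployment; NOT OpenClaw's actual config schema."""
    host: str                  # where the agent lives
    llm: str                   # the model it is connected to
    soul: str                  # personality, goals, style
    resources: List[str]       # things it is allowed to touch
    chat_tool: str             # how you talk to it
    # Assumption for this sketch: skills are NOT auto-installed by default.
    auto_install_skills: bool = False
    installed_skills: List[str] = field(default_factory=list)

    def request_skill(self, name: str, approved_by_human: bool = False) -> bool:
        """Install a skill only if auto-install is on or a human said yes."""
        if self.auto_install_skills or approved_by_human:
            self.installed_skills.append(name)
            return True
        return False


agent = AgentSetup(
    host="cat-shaped-raspberry-pi",
    llm="your-llm-of-choice",
    soul="helpful, curious, slightly feral",
    resources=["email", "calendar", "web browser"],
    chat_tool="Signal",
)
```

With `auto_install_skills` left at `False`, a call like `agent.request_skill("totally-legit-skill")` is refused until a human approves it. Flip that one flag and every skill the agent asks for goes straight in, which is the moment of hesitation described above.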

[2] It’s 2026 and it’s still possible to move a cookie from your machine to my machine and now I’m logged in as you. AI will not fix this.
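For anyone who hasn't seen why this works, here is a toy sketch of a session cookie acting as a bearer token. The in-memory `SESSIONS` store and the `log_in` and `whoami` helpers are made up for illustration and stand in for a real web server; the assumption being modeled is a server that checks only the cookie's value and never binds it to the device that logged in.

```python
import secrets
from typing import Dict, Optional

# Hypothetical in-memory session store: cookie value -> username.
SESSIONS: Dict[str, str] = {}


def log_in(username: str) -> str:
    """Issue a random session token, as a server would set in a cookie."""
    token = secrets.token_urlsafe(32)
    SESSIONS[token] = username
    return token


def whoami(cookie: str, client_machine: str) -> Optional[str]:
    """Identify the user from the cookie value alone.

    client_machine is accepted but deliberately ignored, mirroring a
    server that does not tie the session to a particular device.
    """
    return SESSIONS.get(cookie)


# You log in on your machine and receive a cookie.
your_cookie = log_in("you")
```

Calling `whoami(your_cookie, "your-laptop")` and `whoami(your_cookie, "my-laptop")` both return `"you"`, because nothing in the check depends on where the cookie is presented from. Whoever holds the value is the user.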